Improving human-AI chatbot interactions on health should be a research and health leadership priority

Summary: Growing use of AI chatbots for health is already shaping care-seeking and self-care, creating both opportunities and real risks from confusing, incorrect, or poorly used advice. Health leaders and funders should treat safe, effective human–chatbot interaction as a population-level priority now, investing in public guidance, point-of-care "teaching moments," and implementation strategies that help people use AI chatbots safely and well. This insight brief was informed by a recent Bluemont Health Consulting LLC survey of 400 U.S. adults about their AI chatbot use for health questions.1,2

About one-third of U.S. adults use AI chatbots for health questions,3 about double the share a year ago,4 and a large majority are positive about their experience.3,5

- AI chatbot use is driving care-seeking behavior, lifestyle choices, and self-help.1,3,6

- AI chatbot use could either increase or decrease health care utilization and cost; the net effect is unknown.

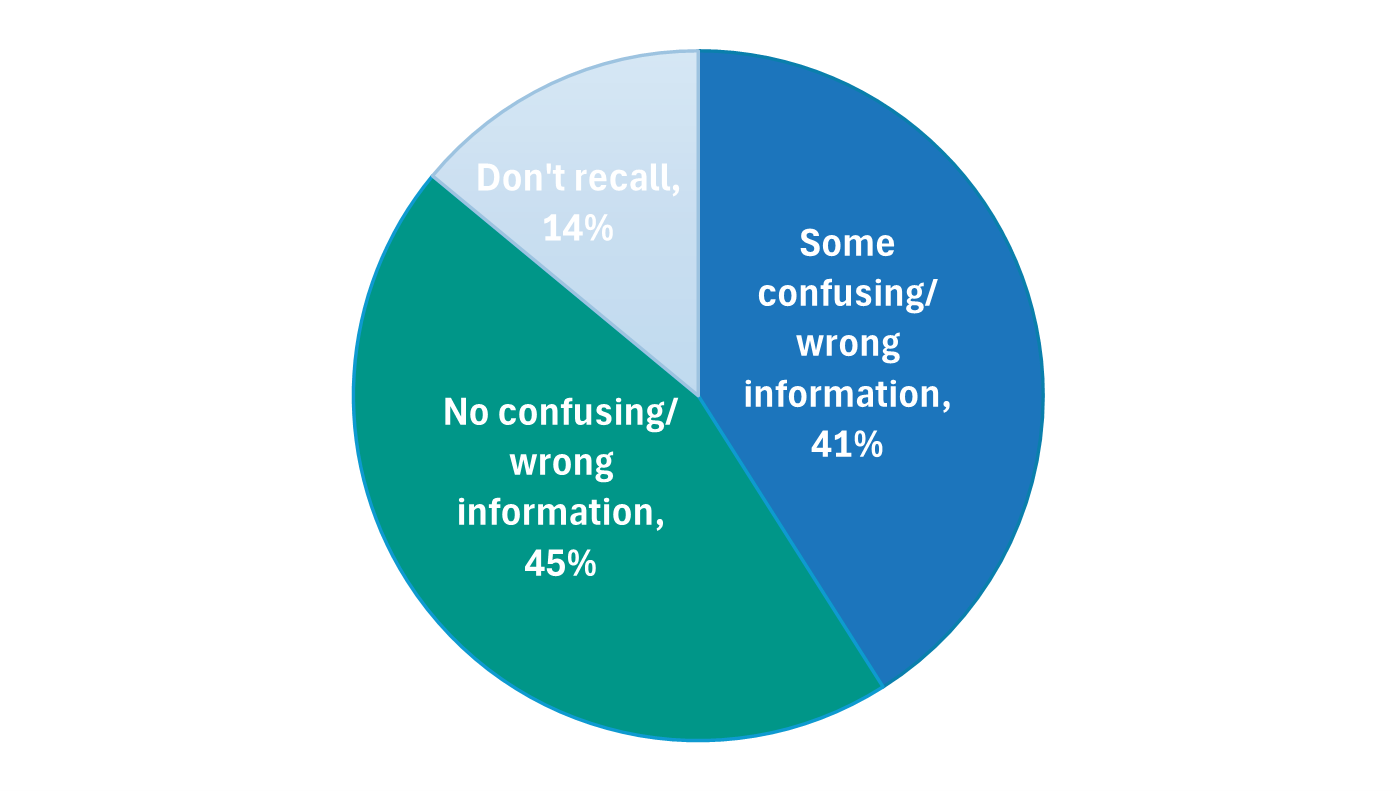

Despite overall favorable views, many people recall receiving some incorrect or confusing information, and they are concerned about privacy of their information.3,7

- Reports of harm from chatbots and research on the potential for harm indicate that some harm occurs,8,9,10 even though people's overall opinions are favorable.

- IT experts are iterating toward chatbot models for health that are more accurate, provide answers that are easier to understand, and are protective of the privacy of health information.11,12

  - ChatGPT Health (OpenAI)

  - Copilot Health (Microsoft)

  - Health AI (Amazon)

  - Claude for Healthcare (Anthropic)

Researchers, clinicians, patients, and health care organization leaders should work together to improve human-chatbot interaction.

- Research suggests that human-chatbot interactions are currently less accurate than chatbot-only performance.13,14

- Unless we improve how people are using the chatbots, even the best future models won't reach their potential, and people could be harmed unnecessarily.

"Despite LLMs alone having high proficiency in the task, the combination of LLMs and human users was no better than the control group in assessing clinical acuity, and worse at identifying relevant conditions."

- Bean et al. 2026 (end-note 13)

"We were surprised to find that adding a human physician to the mix actually reduced diagnostic accuracy…"

- UVA Health Newsroom 2024 (end-note 14)

Three research directions to consider:

(1) Develop robust communication strategies on tips for safe and effective use of AI chatbots for health.

- This includes crafting the messages, personalizing them to an individual's needs or interests, finding ways to effectively deliver them, and keeping messaging up to date as the models, known risks, and opportunities change.

Rationale: The problem of faulty human-chatbot interactions is a population-level problem, deserving of population-level attention. Lessons learned from past public health campaigns would provide valuable starting points. It will be important to consider variations in how younger as well as older adults absorb information.

(2) Support clinicians to take advantage of teaching moments at the point of care.

- Research could identify best practices clinicians could use to respond to incorrect or suboptimal information patients raise at a visit. This could involve printing out tips or sharing a QR code for tips on safe and effective use of AI chatbots.

Rationale: AI chatbot use has complicated medical visits, because clinicians now have to address incorrect or suboptimal chatbot information that patients raise. Some clinicians dismiss this information with little or no explanation (12% of surveyed patients who followed up with a provider reported this), in essence missing a chance to improve the patient's chatbot use going forward.15

(3) Design approaches that consider the full range of how people use chatbots for health questions, as well as population and individual differences in how people will learn.

Rationale: The many ways people are using chatbots for health suggest that a one-size-fits-all approach to improving use is unlikely to work. Influencing behavior on a large scale is challenging and will require drawing on adult learning theory, implementation science, cultural competency, and public health campaign marketing principles.

End Notes

1Felt-Lisk, S. and Kovac, M.D. Using AI chatbots for health questions: The who, what, and why. Bluemont Health Consulting LLC: February 2026.

https://static1.squarespace.com/static/6862ec7b01857d1675e33728/t/6997e9139170c871326d1e63/1771563283110/Using+AI+Chatbots+for+Health+-+The+Who_What_and_Why+2-9-2026-v2-final+%285%29.pdf

2Kovac, M.D. and Felt-Lisk, S. Using AI chatbots for health questions: Survey methodology. Bluemont Health Consulting LLC: February 2026.

https://static1.squarespace.com/static/6862ec7b01857d1675e33728/t/6997daa7cf79e356589308a7/1771559591886/Using+AI+Chatbots+for+Health+Survey+Methodology+%282%29.pdf

3Montero, Alex, M., Montalvo, H., Kearney A., Valdes I., Kirzinger A., and Hamel L. KFF. Tracking Poll on Health Information and Trust: Use of AI For Health Information and Advice. Mar 25, 2026.

https://www.kff.org/public-opinion/kff-tracking-poll-on-health-information-and-trust-use-of-ai-for-health-information-and-advice/

4Holzwarth, M., Nagappan A., Knowles M. The tortoise and the hare of care: Health AI insights from Rock Health's 2025 consumer adoption survey. March 23, 2026.

https://rockhealth.com/insights/the-tortoise-and-the-hare-of-care-health-ai-insights-from-rock-healths-2025-consumer-adoption-survey/

5Author's analysis of Bluemont Health Consulting LLC's survey Using AI Chatbots for Health Questions. End-note 2 links to the methodology and survey instrument.

6Si, Y., Meng Y., Chen X., An, R., et al. Quality safety and disparity of an AI chatbot in managing chronic diseases: simulated patient experiment. NPJ Digit Med (Sep 2025) 8:574 doi: 10.1038/s41746-025-01956-w

https://pmc.ncbi.nlm.nih.gov/articles/PMC12462510/

7Author's analysis of Bluemont Health Consulting LLC's survey Using AI Chatbots for Health Questions. 41% of respondents using an AI chatbot for health questions recalled receiving confusing or incorrect information, and 68% were concerned about privacy (45% somewhat concerned and 23% very concerned). End-note 2 links to the methodology and survey instrument.

8Omar, M., Sorin, V., Wieler, L.H., Charney, A.W., Kovatch, P., Horowitz, C.R. et al. Mapping the susceptibility of large language models to medical misinformation across clinical notes and social media: a cross-sectional benchmarking analysis. The Lancet Digital Health, vol. 8, January 2026.

https://www.thelancet.com/journals/landig/article/PIIS2589-7500(25)00131-1/fulltext

9Ramaswamy, A., Tyagi A., Hugo H., et al. ChatGPT Health performance in a structured test of triage recommendations. Nat Med (2026).

https://doi.org/10.1038/s41591-026-04297-7

10Andrikyan W., Sametinger S.M., Kosfeld F., et al. Artificial intelligence-powered chatbots in search engines: a cross-sectional study on the quality and risks of drug information for patients. BMJ Qual Saf. 2025 Jan 28;34(2):100-109. doi: 10.1136/bmjqs-2024-017476.

https://pmc.ncbi.nlm.nih.gov/articles/PMC11874309/

11Flinn, R. Dr. ChatGPT will see you now. WIRED, July 10, 2025.

https://www.wired.com/story/dr-chatgpt-will-see-you-now-artificial-intelligence-llms-openai-health-diagnoses/

12Huckins, G. There are more AI health tools than ever – but how well do they work? MIT Technology Review, March 30, 2026.

https://www.technologyreview.com/2026/03/30/1134795/there-are-more-ai-health-tools-than-ever-but-how-well-do-they-work/

13Bean, A.M., Payne, R.E., Parsons, G. et al. Reliability of LLMs as medical assistants for the general public: a randomized preregistered study. Nat Med 32, 609–615 (2026).

https://doi.org/10.1038/s41591-025-04074-y

14UVA Health Newsroom. Does AI improve doctors' diagnoses? Study finds out. November 13, 2024.

https://www.uvahealth.com/news/does-ai-improve-doctors-diagnoses-study-finds-out/

15Author's analysis of Bluemont Health Consulting LLC's survey Using AI Chatbots for Health Questions. While 71% of those who followed up with their doctor or health care provider regarding the AI chatbot's advice (n=100) reported the health care provider agreed partially or wholly with the chatbot, 12% reported the health care provider dismissed the information they had shared with little or no explanation. End-note 2 links to the methodology and survey instrument.